Sunoo Park,* The Right to Vote Securely

American elections currently run on outdated and vulnerable technology. Computer science researchers have shown that voting machines and other election equipment used in many jurisdictions are plagued by serious security flaws, or even shipped with basic safeguards disabled. Making matters worse, it is unclear whether current law requires election authorities or companies to fix even the most egregious vulnerabilities in their systems, and whether voters have any recourse if they do not.

This Article argues that election law can, does, and should ensure that the right to vote is a right to vote securely. First, it argues that constitutional voting rights doctrines already prohibit election practices that fail to meet a bare minimum threshold of security. But the bare minimum is not enough to protect modern election infrastructure against sophisticated threats. This Article thus proposes new statutory measures to bolster election security beyond the constitutional baseline, with technical provisions designed to change the course of insecure election practices that have become regrettably commonplace, and to standardize best practices drawn from state-of-the-art research on election security.

Introduction

Imagine it’s a future Election Day. These days, voters can cast their votes during a quick lunch break—by simply filling out their ballot, folding the ballot into a paper airplane, and throwing it out of the nearest window. It’s raining today, but no matter: modern ballots are made of a waterproof, metallic material that withstands rain, snow, or even getting run over by a car. Poll workers are patrolling the streets every couple of hours to collect the paper airplanes strewn over the ground and take them to a secure location for tallying. Each registered voter can obtain a ballot on or in advance of Election Day, so everyone has plenty of time to inform themselves about the issues on the ballot.

Some voters are expressing their appreciation on social media, thrilled at how modern technology has streamlined the voting process—increasing turnout and saving everyone time. But many are pushing back. Some are wondering about the odds that their ballot will make it to the secure tallying location and doubting how much to trust the outcome of this election. Some are questioning the meaning of increased turnout when it comes at the cost of meaningful assurance that cast votes will be counted. Some are asking: Is an election system that affords such potential for widespread alteration and disappearance of ballots after casting even legal?

In fact, it is alarmingly unclear whether and to what extent the U.S. Constitution or other laws permit insecure election systems that allow widespread ballot alteration or disappearance after casting—even in egregious cases like the paper airplane story. It is also unclear whether regular voters (or anyone else) would have standing to challenge such insecure election practices in court. It would be unlawful for the election system or election officials themselves to tamper with ballots—but the legal analysis enters more uncharted waters if the election system “merely” leaves the door wide open to ballot tampering by third parties. The problem is exacerbated if there is uncertainty about whether such tampering actually took place on a specific occasion. Ironically, such evidentiary problems are most likely to arise in cases involving the most lax security: if a door is wide open and unmonitored, then naturally, it is very hard to tell whether unauthorized access or tampering occurred.

The paper airplane story is, of course, far-fetched; an election system with such readily apparent and gaping omissions in its ballot security measures would not be taken seriously. But real election systems can and do have serious security problems, too—problems that are harder to detect, harder to explain, and harder to understand. This only makes the legal status of realistic election systems more difficult to ascertain since, as noted above, the availability of legal recourse is not clear-cut even in the face of readily apparent and egregious security flaws.

The last major congressional effort at modernizing voting technology was the Help America Vote Act (HAVA) of 2002, which aimed in part to address security concerns raised by the closely contested presidential election of 2000.[1] HAVA’s well-meant provisions ultimately led to widespread adoption of “direct recording electronic” (DRE) voting technology[2] (often touchscreen machines), which was generally less secure than the technology it replaced (often optical-scan or punch card ballots). Over the 2000s, extensive security research from many independent groups documented serious security vulnerabilities in these DRE machines.[3] Yet despite conclusive research findings, insightful reporting by journalists, and dedicated advocacy by many, reform has been slow, hard-won over many years, and achieved against vocal resistance.[4] Absent legal obligations on vendors or election officials to mitigate known, serious vulnerabilities in election equipment, and absent adequate funding and resources allocated to support such mitigation, well-documented security flaws in our election system have been gradually and arduously reduced, but not eliminated, over more than a decade. This progress was in large part due to a move back towards basic, paper-based voting methods that either replaced or supplemented existing machines. To this day, election systems in some states still run on vulnerable post-HAVA technology.[5] Meanwhile, proposals to adopt new insecure election technologies continue to crop up regularly and gain considerable traction.[6]

More recently, the issue of election security has regrettably been complicated, and sensationalized, by prominent unfounded claims of election rigging and widespread fraud in the 2020 presidential election, including by the outgoing president himself.[7] Baseless claims of election fraud should, of course, be treated and dismissed as such—as I discuss in more detail later.[8] At the same time, the prevalence of unfounded claims must not obscure the need to redress serious security concerns founded on scientific evidence; that is the focus of this Article.

To date, litigation and legal theories around insecure election infrastructure have been sparse and uncoordinated. Scattered lawsuits challenging insecure election technology have put forward an assortment of legal theories with bases ranging from equal protection to state administrative provisions; they have seen mixed success.[9] Most recently, Georgia courts have considered a series of constitutional challenges to the state’s use of outdated and insecure voting machines, in which the courts recognized plaintiffs’ standing and indicated promisingly that courts may be open to granting injunctive relief; but courts have yet to clarify what constitutional doctrines properly apply to such challenges.[10] Legal academic commentary on the topic has been rarer yet,[11] and none has provided a comprehensive view of constitutional or legislative approaches to election infrastructure security.

This Article is the first to propose a unified constitutional theory of election system security, and the first to lay out a legislative approach based on an integrated view of the technological state of the art in election security and systems security. I develop a constitutional analysis of insecure election systems that comports with theories raised, but not elaborated or disambiguated, by scattered case law, and I conclude that election practices that fall egregiously short of a minimal threshold of security are likely unconstitutional under existing voting rights doctrines. Then I argue that, to bolster election security beyond the constitutional baseline, Congress should enact a statute providing federal standards and resources for securing election systems. In the traditionally highly decentralized domain of election administration, this legislation would provide a framework for state and local election officials to continue to manage and secure election infrastructure locally while drawing on federal funding and resources.

This Article proceeds in six parts. Parts I, II, and III offer historical, technical, and legal background, respectively. Part I overviews HAVA’s history and aftermath, voting technology and security improvements since HAVA, and relevant politics of election security today. Part II provides background on election systems and a technical overview of the problem of securing election infrastructure, drawing in depth upon the computer science literature on election security. It presents a novel formulation of election system security in terms of three requirements (“casting, counting, and checking”), which succinctly captures key considerations for election law. Part III overviews the relevant election law, including constitutional right-to-vote doctrines and election administration legislation.

Parts IV and V then develop my constitutional analysis and legislative proposals, and Part VI addresses potential concerns about the ideas in Parts IV and V. Part IV considers how the constitutional right to vote implies a right to vote securely. It analyzes whether providing insecure election infrastructure amounts to a constitutional violation under existing voting-rights doctrines. The analysis concludes that the use of sufficiently insecure election systems can (1) unconstitutionally burden voting rights and (2) unconstitutionally cause arbitrary and disparate treatment of similarly situated voters. However, for a variety of reasons, constitutional litigation is a poor vehicle for realizing robust election security. Part V thus sets out a legislative approach that can provide a more reliable foundation for election security, proposing detailed measures that new federal election legislation should include to enhance election security beyond baseline constitutional guarantees. It particularly focuses on transparency and auditability measures to ensure robust security when modern digital technology is built into critical election infrastructure. Part VI then considers several potential concerns that might be raised in response to the preceding ideas and discusses: (1) the importance of promoting access and security as complementary, not opposing, values; (2) unfounded speculations of election fraud, such as those promoted by supporters of Donald J. Trump in 2020, and how they are readily distinguishable from legitimate challenges to insecure election infrastructure; and (3) the need for technical, legal, and political approaches to address the problem of election insecurity and lack of public confidence in elections.

I. Historical Background

HAVA[12] was passed in the wake of the controversial election of 2000. Among other things, HAVA responded to concerns about the reliability of election technology raised in the contested Florida presidential race.[13] By the official tally, George W. Bush won Florida by just 537 votes among six million cast,[14] bringing a national spotlight to certain unreliable features (such as “hanging chads”)[15] of the punch card ballot technology then used. This unreliability had been well-documented for over a decade,[16] but no mitigations had been made, perhaps because no election in memory had been close enough for the ballots’ unreliability to cast serious doubt on the outcome. But Florida in 2000 was that close, and the presidency was at stake.

The parties rushed to court, and the Supreme Court’s Bush v. Gore[17] decision meant the original tally was certified without completing a recount. Long story short, Bush became president, and reforming election procedures and technology became a national legislative priority.

HAVA passed two years later and soon afterward led to widespread adoption of DRE voting technology (often touchscreen machines) that was less secure than many of the older systems it replaced.[18] This ill-fated technological reform was due in part to incomplete understanding of the complex security implications of electronic voting systems, alongside legitimate discontent with existing technology.[19] Such post-HAVA complications, along with the fact that no similarly scoped election legislation has been passed in the two decades since, are one indication of the challenges of election system reform—and of the extent to which legislation may be outpaced by technological change.

To this day, election systems in many states run on post-HAVA technology that is vulnerable—and not just to sophisticated, costly attacks. Over decades, research on the security of voting machines and other election equipment has shown a “uniform[] fail[ure] to adequately address important threats against election data and processes.”[20] Incredibly, multiple investigations have found that voting machines and other equipment “are shipped with basic security features disabled”[21] and “fail[] to follow standard and well-known [security] practices,”[22] opening the door to inexpensive, unsophisticated attacks that might be considered the digital equivalent of tampering with ballots in an unlocked, unmonitored place.

Take, for example, Voting Village, an event at a major computer security conference called DEF CON.[23] Voting Village’s findings are dismaying and sadly not novel; the findings reconfirm the kinds of problems that researchers have documented for decades.[24] In 2019, Voting Village participants examined a range of election equipment, most of which were device models in use in more than fifteen states, and all of which were models “currently certified for use in at least one U.S. jurisdiction.”[25] As in preceding years, using surprisingly basic techniques, “participants were able to . . . compromis[e] every . . . device[] in the room in ways that could alter stored vote tallies, change ballots displayed to voters, or alter the internal software that controls the machines.”[26] As an illustration, the participants reprogrammed some machines entirely, modifying them to play video games and to display a popular internet meme called Nyan Cat.[27] While such demonstrations may seem whimsical, some of the same techniques could enable reprogramming to surreptitiously alter how a machine tallies votes in a real election.[28]

The extent of election systems’ reliance on these vulnerable machines has decreased significantly over the last two decades. Notably, (1) the fully electronic voting machines that were widely used in the 2000s have, in most states, been replaced or at least supplemented by more secure voting methods that provide a paper audit trail,[29] and (2) post-election audits to independently verify machine-generated tallies have become more common, and are even mandated by law in a growing minority of states.[30] Those vulnerable machines that are still in use have, in some states, been retrofitted with ballot printers that make audits possible.[31] Auditing and other election procedures have improved in sophistication and frequency, significantly reducing the likelihood of errors or surreptitious compromise going undetected.[32]

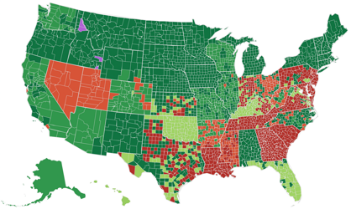

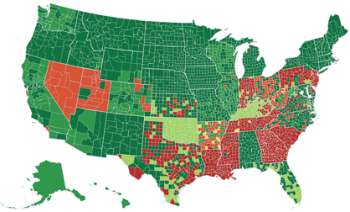

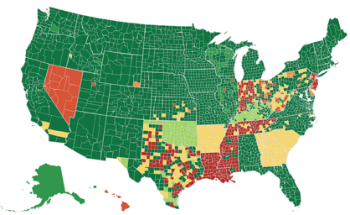

Despite well-known research documenting the serious security flaws of paperless DRE machines in the 2000s,[33] change has been slow and is still ongoing, as summarized in Figure 1. Due to financial and other practical constraints, many vulnerable twenty-year-old machines remain in active, though limited, use, with their security shored up by procedural safeguards (e.g., originally purely electronic machines retrofitted to produce paper records alongside their electronic ones).

The reforms thus far are the fruits of long years of advocacy against vocally resistant opposing interests. Voting machine companies (in an oligopoly market[34]) have been known to brush aside vulnerability reports and threaten security researchers with legal action or attack their motives, rather than fixing problems.[35] Voting machines are expensive for states to buy, upgrade, and replace,[36] and concerns about reputation and voter confidence can create counterproductive pressures to double down on past election management decisions rather than publicizing and implementing costly mitigation of mistakes. The absence of legal obligations to mitigate known election system vulnerabilities further slows the pace of change and tends to result in highly discretionary and localized reform.

Figure 1. Reduction in vulnerable election infrastructure, 2008–2020[37]

(Figure 1 panels: 2008, 2012, 2016, 2020.)

Finally, despite the hard-won progress to date, proposals to adopt new insecure election technology—some of which security researchers have been warning against for years—are made regularly and often gain considerable traction.[38] Some have already been used for pilot programs, as well as limited overseas and military voting in federal elections.[39] Such proposals are often buoyed by the relatable promise of modernity, convenience, and cost efficiency, beside which their security risks may initially appear secondary, especially to those without the expertise to assess the risks’ severity firsthand.

* * *

Today, following the controversial elections of 2016 and 2020, election security is once again near the forefront of U.S. public consciousness and has become an increasingly politicized topic.

In recent decades, claims of election insecurity have often been associated with the politically charged issue of limiting access to elections. But as a member of Congress aptly put it, “[e]veryone agrees that we should make it easier to vote . . . and we should make it harder to cheat.”[40] Access and security are not only compatible; neither is meaningful without the other. Achieving both simultaneously is an important and challenging goal for election policy to work toward.[41]

The 2019 release of the nonpartisan Mueller Report, documenting attempted Russian influence on the 2016 U.S. presidential election,[42] cast a new national spotlight on election security. To some, the Mueller Report underscored the strong incentives for sophisticated adversaries to attack the U.S. election system and the importance of strengthening the system’s security in the future. To others, the Mueller Report confirmed that neither the president nor Russia hacked the 2016 presidential election, with the takeaway that the current system is working well.

In 2020, Trump and his supporters instilled a deep distrust of the election system among a significant segment of the electorate leading up to the presidential election. Following the election, they spread unfounded allegations of widespread fraud and claimed that the election was “stolen.”[43] Trump even cited Voting Village and other security research as supposed support for these claims.[44] Trump’s supporters took their claims to courts across the country, which consistently ruled against every one of hundreds of lawsuits based on such allegations.[45]

The Department of Homeland Security (DHS) responded with a public statement that the 2020 election was “the most secure in American history,”[46] which was corroborated by statements of confidence from the Department of Justice, the Federal Bureau of Investigation (FBI), and the Election Assistance Commission (EAC), as well as numerous state and local election officials.[47] These statements, confirming the Republican loss in the 2020 election, came overwhelmingly from Republican officials.[48]

Over fifty prominent security researchers, many of whom have studied and criticized security weaknesses in election equipment for decades, also responded publicly: “We are aware of alarming assertions being made that the 2020 election was ‘rigged’ by exploiting technical vulnerabilities. However, in every case of which we are aware, these claims either have been unsubstantiated or are technically incoherent.”[49] Citing such research to back up claims of fraud fundamentally misunderstands the research, as they explained: “Merely citing the existence of technical flaws does not establish that an attack occurred, much less that it altered an election outcome.”[50]

The recent trend of misinformation about election integrity poses a threat to U.S. democracy that will only be exacerbated by a continued failure to take security flaws in election equipment seriously. The 2020 election was the most secure in history, but some states are still using twenty-year-old machines with known vulnerabilities, some states are not conducting robust post-election audits, and some states are seriously considering once again replacing their outdated election equipment with new technology that is even less secure. Now more than ever, it is important to strengthen our election infrastructure to be robust not only against any fraud that might occur, but also against the alleged levels of widespread fraud that many ardently believe exist. It is furthermore urgent to establish a robust legal framework to provide procedural protections for the security of U.S. election infrastructure into the future and offer legal recourse against too-insecure systems, while systematically distinguishing and dismissing baseless claims of election fraud.

It is difficult at times to separate election security from the turbulent politics engulfing it. But at its core, election security is not a partisan issue; “insecure and unreliable elections threaten everybody, without regard to party or ideology.”[51] In a democracy, it is in all parties’ interests to prevent technological manipulation of elections, to ensure an election’s true winner is the one elected, and to promote public confidence in elections. But even through a cynical lens of pure partisanship where each party’s interest is simply to win, when faced with insecure election infrastructure, all parties should be similarly concerned, as one cannot know whether a hacker’s allegiance will be with one party, the other, or a foreign power. It is in nobody’s interest that the election be decided by whichever gang of hackers prevails.

II. What Is a Secure Election System?

Election system security is a special case of system security.[52] System security “is about building systems to remain dependable in the face of malice, error, or mischance.”[53] Security professionals recognize that no system behaves perfectly at all times;[54] thus, a secure system is one that behaves reliably as intended—not always, but as much of the time as reasonably possible, even under unexpected circumstances or when subjected to adversarial attacks. If and when a secure system fails, it should do so detectably so that the people who depend on it are not lulled into false complacency even though something has gone wrong, and so that the results of the failure can be treated appropriately (and ideally, redressed).

In any particular context, “robust security design requires that the . . . goals [(i.e., intended behavior and failure modes)] are made explicit.”[55] What does this mean for elections? An election is “a process in which [eligible] people vote to choose a person or group . . . to hold an official position,”[56] or to choose a decision to be taken. The specifics of voter eligibility and methods of choice among candidates (or outcomes) will vary from jurisdiction to jurisdiction, office to office, and election to election. As such, election security is a procedural property. A secure election is one in which the applicable substantive rules[57]—whatever they are—are accurately and verifiably followed. The basic intended behavior of an election system is to aggregate the electoral preferences of eligible voters and produce a list of elected candidates (or decisions) as determined by those preferences according to applicable substantive rules, and a secure election system is one that either performs this function or fails detectably.

What does it take for an election system to be secure, as described above? There is no standard and comprehensive definition of election system security, covering all parts of an election system, that is collected in one authoritative place.[58] However, there is a core set of concepts to which research, policy, legislative, and media discourse about election system security consistently refers. I propose a three-part characterization of election system security, incorporating these core concepts.

A secure election system must provide reliable guarantees that (1) every eligible voter, and nobody else, has a meaningful opportunity to cast exactly one vote for the outcome of their true preference; (2) the reported election outcome accurately reflects the votes cast by eligible voters;[59] and (3) in case, for whatever reason, the preceding two requirements are not met, the system clearly and reliably produces evidence of its failure, checkable by all interested parties (i.e., the electorate). The third guarantee implies credible public assurance of the preceding two guarantees by ensuring that any errors or tampering will be publicly evidenced.[60]

I call these the casting guarantee, the counting guarantee, and the checking guarantee. The three-part characterization of election security as casting, counting, and checking is an oversimplification, but it is a useful one that succinctly captures the key elements of election system security that election law is generally concerned with. It comports with existing scholarship and policy statements, including those with more technical detail than my definition offers,[61] and implies well-established requirements such as ballot secrecy (as detailed below).[62]

Next, Section II.A overviews the many parts of an election system. Section II.B describes the casting-counting-checking framework in more detail. Section II.C explains the security properties of traditional paper-ballot-based election systems in each of the three aspects. Section II.D explains some key points where introducing complex modern technology into election infrastructure may create new security risks not present in traditional paper-based systems, and on the other hand, notable areas where new technology promises to enhance security. Section II.E then describes how paper ballots can provide strong security guarantees, even in machine-tallied election systems. Finally, Section II.F overviews key differences between potential risk, realized risk, and magnitude of risk from security vulnerabilities.

A. What Is an Election System?

The term “election system” (or “election infrastructure”) encompasses a broad range of infrastructure that is used in the operation of elections, starting from voter identification and registration all the way to reporting of election results and post-election auditing: “storage facilities, polling places, and centralized vote tabulations locations used to support the election process, . . . technology to include voter registration databases, voting machines, and other systems to manage the election process and report and display results on behalf of state and local governments.”[63] For our purposes, election infrastructure comprises all the “systems [that] collect, process, and store data related to all aspects of election administration,”[64] and procedures associated with the use of those systems. In the United States, tallying is completed by machine except when special circumstances call for a hand recount.[65]

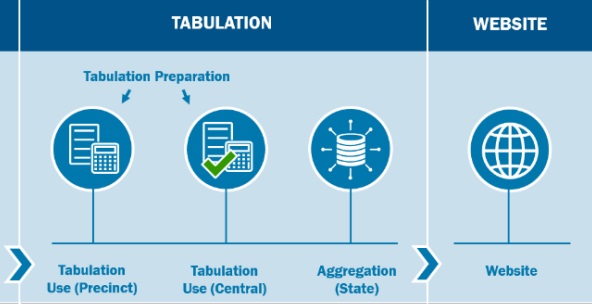

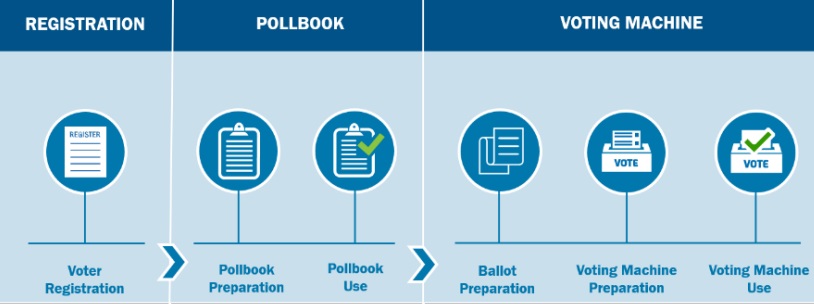

Figure 2. Functional overview of a U.S. election ecosystem (not comprehensive)[66]

In 2017, the DHS designated election systems as critical infrastructure, calling them “vital to our national interests” and noting that “cyber attacks on this country are becoming more sophisticated, and bad cyber actors—ranging from nation states, cyber criminals and hacktivists—are becoming more . . . dangerous.”[67]

Figure 2 shows a “functional overview” of an election process in the United States, designed by the Cybersecurity & Infrastructure Security Agency (CISA), which encompasses many (but not all) components of election systems. The most informative conceptualization of election systems varies by context. For voters, for example, most activity is on election day; but for election administrators, election day activity is but a small part of a much longer process.[68] Many different types of technology as well as human processes are involved in election systems.

B. Casting-Counting-Checking Framework of Election System Security and the CIA Triad

Often, security is broken down into three essential components using the acronym “CIA,” which stands for confidentiality, integrity, and availability.[69] CISA summarizes the CIA triad as follows: confidentiality attacks involve unauthorized “theft of information”; integrity attacks involve unauthorized “changing of either the information within or the functionality of a system”; and availability attacks involve “the disruption or denial of the use of the system.”[70]

By considering what CIA requirements are necessary to effectuate the casting, counting, and checking guarantees specific to election systems, we can identify more concrete operational requirements that the systems must satisfy to be considered secure. Next, I explain how analyzing the casting-counting-checking framework with the CIA triad in mind yields a range of concrete properties widely considered important for election security.

Casting and confidentiality. Consider ballot secrecy, for example, a confidentiality guarantee considered essential in modern elections. Why is it considered so important to keep secret how people vote? After all, much information involved in the election process is deliberately made public for transparency reasons.

The U.S. Supreme Court put it this way: “A widespread and time-tested consensus demonstrates that [ballot secrecy] is necessary in order to serve . . . compelling interests in preventing voter intimidation and election fraud.”[71] Election security scholars in computer science (including myself) have summarized it like this: “Protecting ballot secrecy provides a strong and simple protection against coercion and vote selling: if you cannot be sure how anyone else voted, this removes your incentive to pay them or threaten them to vote the way you’d like.”[72] And, corroborating this from a historical perspective, election law scholars have noted that “[b]ribery of voters was far and away the greatest impediment to the integrity of elections before the introduction of the secret ballot, a fact well known not only to historians but to readers of great 19th century fiction.”[73]

As such, the secret ballot is an indirect yet crucial consequence of the casting guarantee’s requirement that voters must have a meaningful opportunity to cast exactly one vote for the outcome of their true preference. Without ballot secrecy, voters could be coerced or persuaded to cast votes for an outcome that does not correspond to their true preference—a serious threat to the legitimacy of a democratic election. Many states impose other confidentiality requirements in addition to ballot secrecy (e.g., on voter information or partial tallies).

Casting. To ensure that only eligible voters can cast a vote, and that all eligible voters can cast a vote, voter registration information must be kept up-to-date and protected from unauthorized modification. To ensure that nobody can vote more than once in an election, there must be a reliable way of checking whether someone has already voted. And to ensure that recorded votes express the voter’s intention, voters must have an opportunity to check their ballot and verify its contents before casting.
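As a rough illustration of the first two checks above, the following Python sketch models a toy poll book that admits only registered voters and refuses a second check-in. The class name `PollBook`, its fields, and the voter identifiers are hypothetical and chosen only for this example; real voter registration systems are far more involved. Note that the sketch records who has voted but nothing about how they voted, consistent with ballot secrecy.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class PollBook:
    """Toy voter roll (hypothetical): who is registered, and who has already voted."""

    registered: frozenset[str]
    voted: set[str] = field(default_factory=set)

    def check_in(self, voter_id: str) -> bool:
        """Admit a voter only if they are registered and have not yet voted."""
        if voter_id not in self.registered:
            return False  # not an eligible voter
        if voter_id in self.voted:
            return False  # would be a second vote
        # Record only the fact of voting, never the ballot's contents.
        self.voted.add(voter_id)
        return True


book = PollBook(registered=frozenset({"alice", "bob"}))
first_attempt = book.check_in("alice")    # registered, first visit
second_attempt = book.check_in("alice")   # same voter again
unregistered = book.check_in("mallory")   # not on the roll
```

The sketch also shows why the registration data itself must be protected: anyone who could alter `registered` or `voted` without authorization could admit ineligible voters or turn away eligible ones.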

Ensuring that all eligible voters have a meaningful opportunity to cast a vote requires that the means of voting must be accessible to all eligible voters with low cost and effort throughout the allowed voting period. As previously noted, accessibility is sometimes seen as a separate issue from security, or even portrayed as in tension with security.[74] But in fact, the availability prong of the standard CIA triad means that ensuring accessibility for all intended users—even in the face of adversarial attacks—is a fundamental goal of securing any system, including election systems.

The availability requirement is unusually challenging for election systems because (1) the group of people intended to access the system (i.e., the electorate) is highly diverse, and (2) concurrent confidentiality and integrity requirements in elections mean that ensuring secrecy and independence for every individual voter is paramount. An election system must allow all voters—regardless of education, technological proficiency, disability, or other characteristic—to cast a secret ballot, durably recorded with just as credible a guarantee of being correctly counted in the election outcome as any other voter’s ballot.

Counting. A similar analysis of the counting guarantee yields additional requirements for election security. For example, the counting process must ensure that the preferences indicated on cast ballots are aggregated accurately (integrity), and the counting infrastructure must remain functional throughout the election (availability).

Checking. Finally, in case any of the preceding casting and counting requirements fail, the checking guarantee requires a publicly verifiable indication of failure. For example, if some ballots are lost or altered—due to human error, natural disaster, adversarial attack, or something else—the system must indicate the loss. This enables correction if adequate evidence is available or, in the worst case, allows for the election to be rerun—an undesirable eventuality that is nevertheless preferable to (perhaps unknowingly) accepting an incorrect outcome. For computer-based systems, this means ensuring that any computerized processes “show their work” in independently human-checkable form, thus providing evidence of their correct functioning (or evidence of any problems that occurred)[75]—a principle termed “software independence” in the election security literature in computer science.[76] The idea is that “you never want to be in a position where you have to say, ‘[The result is right just] because the computer says so!’”[77]

As described by CISA, “[e]very state has voting system safeguards to ensure each ballot cast in the election can be correctly counted” (casting) as well as “laws and processes to verify vote tallies before results are officially certified” (counting), including “robust chain-of-custody procedures, auditable logs, and canvass processes.”[78] Furthermore, the use of paper records “allow[s] for tabulation audits [(i.e., checking tabulated values by inspecting the original voter-verified paper ballots)] to be conducted from the paper record in the event any issues emerge” (checking).[79] Finally, most stages of the election process (except, of course, the marking of the secret ballot by voters) are subject to observation—by bipartisan representatives, nonprofits, NGOs, and the public—as a transparency measure “to add an additional layer of verification.”[80]

* * *

Current systems do not achieve perfection on casting, counting, or checking. Designing a perfect system is out of the reach of current human knowledge and will likely remain out of reach for the foreseeable future due to human fallibility.[81] How well, then, must each of the guarantees described above be satisfied? The kind of assurance considered adequate to support election outcomes as legitimate—as evidenced by broad acceptance, even if reluctantly, of the outcome across a given society—has changed over time, depending on societal context and norms as well as what is within the reach of contemporary system design. Such changes generally tend toward stronger security guarantees. Available alternatives are significant; a system that might once have been adequate may no longer be considered acceptable once a practical alternative with superior security guarantees is established. Next, I provide two illustrations of the evolution of societal expectations about secure election conduct in rather different contexts.

The history of the secret ballot provides one informative illustration. “For the first 50 years of American elections, . . . those with the right to vote (only white men at the time) went to the local courthouse and publicly cast their vote out loud” after “swear[ing] on a Bible that they were who they said they were and that they hadn’t already voted.”[82] The difference between then and now illustrates the extent of norm shifts over the centuries as well as the fallacy of opposing measures to strengthen election security on the grounds that the current system seems to function passably—a rationale as valid then as now. Paper ballots were first used in the nineteenth century, and government-printed, anonymous paper ballots (pioneered by the Australians in 1858) were first adopted in the late 1800s. Voting machines became popular in the United States from the early 1900s: first, mechanical lever machines, then punch cards and optical-scan technology, then touchscreen and other DRE voting machines, and most recently a shift back toward machines that (unlike lever or DRE machines) produce voter-verifiable paper records. Each of these transitions in voting technology was accompanied by concerns about the preceding technologies’ reliability and the promise—whether or not borne out—that the new technology would improve election integrity.

The history of Black suffrage in the United States provides another illustration. Even after Black Americans’ right to vote gained constitutional protection in the Fifteenth Amendment,[83] many serious barriers to access remained. Many eligible voters still did not have a meaningful opportunity to vote due to hostilities ranging from disguised and state-sponsored tactics, such as literacy tests, to overt but less official tactics, such as threats, violence, murders, and other forms of intimidation at the polls.[84] Over time and after much advocacy, many such barriers became more widely recognized by judges and legislators as racially discriminatory and unacceptable, and new constitutional jurisprudence as well as legislation (e.g., the Voting Rights Act) were developed to improve the security of elections against such access barriers with varying success.[85] While the situation has improved enormously since the Fifteenth Amendment’s passage, voter suppression persists today, and the process of improving access to elections for all eligible voters is an ongoing one, with growing public attention and better data about access barriers driving modern concerns and proposals for improvement.[86]

C. Security Properties of Traditional Paper-Ballot-Based Systems

Let us consider an old-fashioned paper-ballot-based election system with ballot secrecy, say, circa 1900. Traditional paper-ballot-based election systems provide remarkably robust support for the casting, counting, and checking guarantees in several respects—perhaps surprisingly, so much so that the state of the art in digital paperless technology is still unable to provide comparable guarantees in these respects.[87]

What, then, are the key security properties of an old-fashioned paper-ballot-based voting system? Voters can straightforwardly verify that the contents of their ballot match their intentions, at the time of casting, if they mark them by hand and then drop them in a box. Individual physical voting booths help ensure secrecy at the time of ballot marking, in a manner unparalleled by any remote voting method.[88] Tampering with ballots after they have been cast is difficult to achieve undetected if the ballot box is under continuous supervision and observation for the duration of the election. Tampering with paper ballots at scale is even more difficult, requiring human labor and risk of detection roughly in proportion to the number of ballots tampered with.[89] Hand counting of paper ballots can be protected against human error as well as malice by having teams of multiple people count each ballot. Hand or machine counting of paper ballots can be protected against error and malice by post-election audits that cross-reference hand-inspected paper ballots. And, as CISA emphasizes, in case of any problem or dispute, there is an authoritative record of durable paper ballots to go back to and to recount if necessary.

However, paper ballots hand-marked in the traditional way have significant accessibility limitations, failing to provide a meaningful opportunity to vote to certain groups of eligible voters. Traditional hand marking of paper ballots is simply not a possibility for many voters with disabilities, as well as illiterate voters.[90] The groups that would be rendered unable to vote unassisted (and thus denied a secret ballot) by requiring hand-marked paper ballots make up a significant percentage of the United States population—currently available statistics do not yield a precise number, but it is at least 4 percent, and likely larger.[91] In many non-election-related situations, the accessibility of a service can be augmented by adding an alternative accessible way of using the service, but such solutions are often unsuitable for the election context; they are likely to violate important security guarantees (the secret ballot) and federal laws about accessibility[92] as well as undermine the independence and dignity of voters with disabilities. While important progress has been made in accessible voting system design in recent decades, building voting systems that provide integrity and verifiability guarantees commensurate with those offered by paper-based systems as described above—but for all eligible voters, including those who cannot hand mark paper ballots—remains a pressing and challenging question in election security.[93]

For our purposes, a traditional paper-ballot-based system provides an informative case study given that more than a century of history has established the security guarantees of a paper-based secret ballot as a baseline to improve upon. Today’s election systems should provide at least comparable or better security guarantees to be considered adequate.

D. Benefits and Security Risks of Modern Technologies in Election Systems

Modern technology can and does play a role in many parts of today’s election systems. The introduction of new technology has led to many significant improvements in security over the years, for example, in voter registration, results reporting, and accessibility.

Statewide electronic voter registration databases and electronic pollbooks have greatly improved the efficiency and reliability of managing voter registration information. Digitally cross-checking voter registration information against other electronic databases—such as driver registration records, post office records, and other state voter databases—has also improved the accuracy and timely update of voter information.[94] Of course, security is critical in managing voter registration information; while modern registration systems following security best practices have great benefits, multiple poorly secured voter databases have suffered attacks,[95] underscoring the need for security expertise and training for all election infrastructure.

Technology has also long played a key role in streamlining election results reporting ever since the telegraph’s first use in 1848 to quickly transmit vote counts from coast to coast, a massive improvement from relying on transmission of results “from distant precincts on horseback, carriage, or train.”[96] Today, radio, television, and the internet have further transformed the way election night reporting is done. Security in results reporting is essential too; the EAC has issued guidance on recommended security practices.[97] State certification of official election results (following media reporting of unofficial election results) is yet another step, with requirements separate from those for reporting.[98]

Accessibility is a third area where modern technology has opened new possibilities. Voters unable to use traditional paper ballots used to be required to compromise their independence and ballot secrecy by asking another person to fill out and cast their ballot for them—and hoping that their instructions would be faithfully followed.[99] In recent decades, new technologies have enabled some of these voters to independently cast secret ballots, although much progress remains to be made.[100] Such technologies gained prominence after 2002 due to HAVA’s accessibility provisions.[101] Some of these technologies entirely replace voter-verifiable paper records with unverifiable alternatives. Unfortunately, research since HAVA has established that such approaches entail serious security flaws.[102] However, other technologies for accessible vote casting, such as ballot-marking devices—that is, devices that allow a voter to mark a paper record whose contents the voter can verify before casting—show promise in improving accessibility while also preserving more of the strong security properties of traditional paper-based systems.[103] Furthermore, such new technologies may provide usability benefits for all voters,[104] for example by flagging possible mistakes such as not marking any candidate for president.[105]

* * *

It may seem remarkable that the state of the art in modern technology does not provide techniques to achieve similarly strong integrity and verifiability guarantees when replacing paper-ballot-based systems with technology that does not create a voter-verifiable paper evidence trail. Part of the challenge is that modern technology is often susceptible to hard-to-detect attacks that can be executed remotely. More complexity can actually be a drawback here, as it makes detection harder and presents more opportunities for attacks, especially at a large scale. Another part of the challenge is more basic: modern software engineering has not figured out how to build computer systems free of “bugs” or errors.[106] While we are able to build remarkably complex, apparently functioning computer-based systems, they are constantly tweaked and refined to remove bugs as they are discovered in a process that is always ongoing and never considered complete.[107] Seemingly security-critical systems such as electronic banking actually often fail, in ways that are hidden behind the scenes, and are designed in the anticipation of paying the costs of failure in monetary terms (e.g., through insurance).[108] Of course, insurance cannot provide a solution for election insecurity; the requirements of a democratic election cannot be satisfied by simply paying off the people whose votes were not counted.

Concretely, let us draw the contrast with the security properties of paper ballots as discussed in Sections II.C and II.E. Individual physical voting booths provide a much weaker guarantee of secrecy if the voter is using technology that might, if wrongly configured or compromised, be accessible from outside the booth or store cast votes with identifying information. Voters cannot verify the contents of an electronic ballot at the time of casting as it is not practicable for them to inspect the actual electronic information being saved or transmitted out of the voting machine—and any human-readable representation of the ballot they see might not match the actual information being electronically transmitted if the machine is wrongly configured or compromised.[109] Undetected tampering with electronic ballots after they have been cast may be possible if the ballot storage equipment is wrongly configured or compromised, and continuous observation of the equipment is unlikely to be able to detect sophisticated attacks. Tampering with electronic ballots at scale is often as little effort as tampering with just a few ballots, if the storage equipment is wrongly configured or compromised. Consider, for example, that editing a whole column in a spreadsheet can be as quick as editing a single cell. The risk of detection for someone attempting ballot tampering can also be much lower due to the preceding factors, among others. Hand inspection or counting of purely electronic ballots is not practicable, as noted above. Thus, machine counting of electronic ballots cannot be verified by post-election audits that cross-reference hand-inspected ballots. In case of a problem or dispute, the only record to go back to is electronic, a form that is not directly human-readable and is much more susceptible to tampering than paper-based alternatives.

These types of security weaknesses are exactly those underlying many of the vulnerabilities discovered and demonstrated in security researchers’ reports over the years.[110] Many of the egregious examples from those reports—for example, paperless voting machines that allow tampering by anyone with sufficient know-how who has physical access to the machine for a few minutes[111]—can be considered neither to provide a meaningful opportunity to cast a vote (casting), nor to provide a strong guarantee that the reported election outcome is consistent with the votes actually cast (counting), nor to provide credible public assurance of a correct outcome by ensuring detection of errors (checking).

E. How Paper Ballots Can Enhance the Security of a Machine Count

In the United States today, even where paper ballots are used, tallying is performed by machine, except in special circumstances calling for a hand recount.[112] Sometimes tallying is done by scanning ballots and then processing the output of the scan (e.g., digital ballot images) using tabulation software that interprets the scan output and tallies the interpreted results. Sometimes—in the so-called “DRE with VVPAT” model[113]—tallying is performed electronically by DRE voting machines based on information stored electronically in the machines when voters use them to vote; but there is still a human-readable paper ballot. The paper ballots are printed when the voter is ready to cast their vote on the machine. The paper should reflect the voter’s electronic (e.g., touchscreen) selections, and the voter can and should review the paper before the final act of casting.

How can paper ballots provide additional security if the tallying is done by machine and the election outcome is determined from the machine tally? This is a natural question—and indeed, misinterpretations of paper ballots’ security properties have led some to claim that the paper’s purpose is just to “comfort” voters.[114] Such claims are false. In fact, paper ballots provide a strong guarantee of the correctness of an election outcome based on a machine tally when accompanied by post-election audits. Remarkably, such audits generally need not manually examine all or nearly all ballots to achieve high confidence.

Post-election audits may use various approaches to check whether an election was conducted properly.[115] Some traditional kinds of post-election audits have been routinely performed and legally required for decades.[116] A newer type of audit, called a risk-limiting audit (RLA), offers a statistical check on the correctness of a reported election outcome far more efficiently than previous methods.[117] RLAs work by manually reexamining some ballots in order to confirm that the votes observed are statistically consistent with the reported election outcome.[118] An RLA differs from a manual recount in that the RLA aims to corroborate the reported election outcome while manually examining just a small sample of ballots, far fewer than all ballots cast. However, if the initial steps of an RLA reveal a potential inconsistency, it may be necessary to examine more ballots or do a full recount in order to confirm the reported election outcome.[119] Crucially, RLAs “can efficiently establish high confidence in the correctness of election outcomes—even if the equipment used to cast, collect, and tabulate ballots to produce the initial reported outcome is faulty.”[120]
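To make the sampling idea concrete, the following is a minimal sketch of one kind of RLA, a ballot-polling audit in the style of the BRAVO method, for a hypothetical two-candidate contest. It is an illustration under simplifying assumptions (exactly two candidates, no invalid ballots, ballots sampled with replacement), not a description of any jurisdiction's actual procedure. The function name, its parameters, and the "W"/"L" encoding of hand-read ballots are invented for this example.

```python
import math
import random


def ballot_polling_audit(ballots, reported_winner_share, risk_limit=0.05,
                         max_draws=5000, seed=0):
    """Simplified two-candidate ballot-polling audit (BRAVO-style sketch).

    ballots: list of "W" (reported winner) or "L" (reported loser) strings,
             standing in for paper ballots that auditors read by hand.
    reported_winner_share: winner's vote share according to the machine tally.
    Returns (confirmed, draws). confirmed=False means the sample did not
    confirm the outcome and escalation (e.g., a full hand count) is needed.
    """
    if not 0.5 < reported_winner_share < 1.0:
        raise ValueError("reported winner share must be strictly between 0.5 and 1")

    rng = random.Random(seed)  # real audits use a publicly generated seed
    # Sequential likelihood-ratio test of "reported share is right" against
    # "the contest is actually a tie". Work in logs for numerical stability.
    threshold = math.log(1.0 / risk_limit)
    step_winner = math.log(reported_winner_share / 0.5)
    step_loser = math.log((1.0 - reported_winner_share) / 0.5)

    log_ratio = 0.0
    for draws in range(1, max_draws + 1):
        ballot = rng.choice(ballots)  # draw one paper ballot at random
        log_ratio += step_winner if ballot == "W" else step_loser
        if log_ratio >= threshold:
            return True, draws  # strong evidence that the reported winner won
    return False, max_draws  # not confirmed within the sampling budget


if __name__ == "__main__":
    # Hypothetical contest: 100,000 ballots, reported result 60% to 40%.
    paper_ballots = ["W"] * 60_000 + ["L"] * 40_000
    print(ballot_polling_audit(paper_ballots, reported_winner_share=0.60))
```

Under these assumptions, a contest with a true 60/40 split and a 5 percent risk limit is typically confirmed after inspecting on the order of 150 ballots out of 100,000, while narrower margins require larger samples. If the paper ballots do not support the reported winner, the test statistic does not reach the threshold and the audit escalates rather than confirming the machine tally.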

That said, even absent post-election audits, paper ballots provide a smaller but still significant security benefit over paperless systems: they provide a voter-verified record to reference in case of a dispute or recount request. Without paper records, no meaningful recount is possible; the machine will “simply spit out the same tally as before.”[121]

Paper ballots provide security benefits only if used correctly, however. The voter must have the opportunity to inspect the record and to rectify it before casting the ballot in case it contains errors (i.e., votes different from the voter’s intent)[122]—otherwise, a machine could simply print out a record consistent with its reported tally, regardless of voters’ intent. Moreover, paper ballots only provide a strong guarantee of correctness in conjunction with routine post-election audits. The paper ballots can only reveal errors in the election outcome if they are checked against the tally—and without post-election audits, that would likely only happen in case of a dispute or recount, instead of routinely after each election. If the paper is never referenced, then it will not have any effect.

In summary, paper ballots can offer greatly enhanced security to machine-tallied elections by strengthening the checking guarantee. The move from paperless electronic machines to paper-ballot or VVPAT-based voting systems in most states, and the increase in the quality and frequency of post-election auditing in many states, have been the key features of the improvement in election system security over the last decade and a half.

F. Potential Risk, Realized Risk, and Magnitude of Risk (or Vulnerabilities and Exploitation)

It is important to distinguish the concepts of potential risk, realized risk, and magnitude of risk arising from security weaknesses in systems.

When someone discovers and describes a security weakness, they have documented the existence of a potential risk to the system. In the security community, this is called a vulnerability. If someone takes their knowledge of a vulnerability and uses it to damage the confidentiality, integrity, or availability of a nonexperimental system, they have realized that potential risk. In the security community, this is called exploitation; a specific method for exploiting the vulnerability is called an exploit.

Confusingly, the term attack can be used to describe either vulnerabilities or exploits. A vulnerability is essentially the description of an attack; an exploit is the attack executed on a real system.

Understanding and disseminating information about vulnerabilities is considered an essential part of building secure systems. Since systems are imperfect, we strive to learn about their vulnerabilities in order to understand how to mitigate them and thus better secure the systems in the future[123] (deliberate exploitation, however, is not a standard part of the research or development process). A preemptive approach to systems security is critical for building resilient systems, given that exploits can be unexpected and hard to detect and can cause ongoing surreptitious damage until a mitigation is deployed[124]—a point of importance for Part IV’s legal theory, which promotes preemptive redress.

The likelihood that a vulnerability will be exploited—that is, that a potential security risk will be realized—depends on many factors.[125] Technical factors include the nature of the specialized knowledge, equipment, network access, and credentials required to perform the corresponding exploit, as well as what kinds of hardware and software are susceptible. Essentially, a technical analysis examines how hard it would be to perform the exploit. But the likelihood, or precise magnitude of risk, that a vulnerability will be exploited depends on many additional nontechnical factors: economic, sociological, anthropological, political, and more.[126] As such, any quantification of the likelihood of exploitation will inherently contain far larger uncertainty than a purely technical analysis of a vulnerability’s severity.

In the computer security community, methods of assessing and describing the severity of vulnerabilities often focus on the technical question of how difficult exploitation would be.[127] Often, vulnerability research provides only a technical analysis of severity, without reaching nontechnical factors or probability of exploitation (and rightly so, where the researcher’s expertise is only technical). Especially for severe vulnerabilities in high-stakes situations, mitigation efforts may proceed immediately based on such technical analysis, without (or before) estimating the precise likelihood of exploitation.

* * *

Vulnerabilities and exploits are closely related. As such, the concepts are sometimes conflated in popular perception. A prominent and unfortunate recent example comes from Trump and some of his supporters’ claims that the 2020 election was “stolen” and involved “massive fraud,” where they cited reputable security research in supposed support of their allegations.[128] Security researchers were swift to rebut these claims and to underscore the difference between potential and realized risk:

The presence of security weaknesses in election infrastructure does not by itself tell us that any election has actually been compromised. Technical, physical, and procedural safeguards complicate the task of maliciously exploiting election systems, as does monitoring of likely adversaries by law enforcement and the intelligence community. Altering an election outcome involves more than simply the existence of a technical vulnerability.[129]

In other words, the reference to reputable security research to support such fraud claims is like citing reputable research showing that a certain kind of front door lock can be picked with a hairpin (i.e., a vulnerability) to prove an allegation that millions of houses in carefully guarded gated communities were broken into by lockpicking last year, and millions of valuables were stolen (i.e., exploitation at a massive scale). The causality is just not there, even if many of the houses in question used those faulty locks. Of course, that does not mean there is no need to fix the locks. The flaws in the locks are real, they pose a real threat to safety, and they should be fixed as soon as possible. At the same time, claims of massive break-ins and theft are not credible absent specific evidence of the same. They are all the less credible in a context with extensive procedural security measures beyond the flawed technology itself, such as security guards and surveillance cameras in the case of a housing complex or chain-of-custody monitoring and post-election auditing in the case of elections.

III. Legal Background

This Part reviews the law potentially applicable to securing election infrastructure and election administration. Election management in the United States is highly decentralized: local officials bear most of the responsibility for conducting elections, so large variations in election systems can occur even within a single state.[130] Federal involvement in election administration is limited, and most decisions are made at the state or local levels.

Next, Section III.A describes relevant constitutional doctrines, and Section III.B discusses statutory requirements on election administration.

A. The Constitutional Right to Vote

The U.S. Constitution does not explicitly enumerate a right to vote, but instead implies the existence of such a right through its amendments prohibiting abridgment of that right on the basis of race,[131] sex,[132] failure to pay a poll tax,[133] or, for anyone eighteen years of age or older, age.[134] The Supreme Court has “repeatedly recognized that all qualified voters have a constitutionally protected right to vote, and to have their votes counted,”[135] and has long described the right to vote as “fundamental” and “preservative of all rights.”[136]

Moreover, “the right to vote is the right to participate in an electoral process that is necessarily structured to maintain the integrity of the democratic system.”[137] Other dicta in Supreme Court voting rights cases reinforce this perspective, for example, by emphasizing the importance of “public confidence in the integrity of the electoral process” for “citizen participation in the democratic process.”[138] Effectively, “the right to vote”—both legally and colloquially—is shorthand for all of the above requirements combined.[139]

There are at least three[140] federal constitutional voting rights doctrines that bear on election security under which a constitutional right to vote securely might naturally arise: (1) the Anderson-Burdick balancing test for burdens on the right to vote, (2) Bush v. Gore’s prohibition of arbitrary and disparate treatment of voters, and (3) vote dilution. State constitutional voting rights may afford additional protection.

Anderson-Burdick: Burdens on the right to vote. Government-imposed burdens on voting rights are unconstitutional under the First and Fourteenth Amendments unless they are adequately justified as furthering an important state interest.[141] Anderson and Burdick involved constitutional challenges to an early filing deadline for independent candidates and a ban on write-in voting, respectively.[142] The Supreme Court deemed the deadline in Anderson to be a severe burden on voting and associational rights[143] and held it unconstitutional,[144] but considered the burden imposed by the ban in Burdick to be “slight” and “very limited” and upheld the ban.[145]

Under the Anderson-Burdick balancing test (sometimes characterized as a “sliding scale”),[146] the level of scrutiny to be applied to a challenge to an election regulation depends on the magnitude of the burden it places on voting rights.[147] Severe burdens call for strict scrutiny, while more limited burdens call for more permissive scrutiny.[148] Any burden, “however slight,”[149] is subject to Anderson-Burdick analysis, meaning that essentially any election regulation is properly treated as a burden.[150]

The Supreme Court more recently applied the Anderson-Burdick framework in Crawford v. Marion County Election Board to a law requiring photographic identification to vote. The opinions in Crawford indicated significant divergence in the Justices’ views on the fact-specific application of the balancing test. Lower court decisions applying Anderson-Burdick in other contexts underscore its highly fact-dependent nature. For example, regulations requiring documentary proof of eligibility to vote were upheld and struck down in different contexts,[151] as were provisions limiting early voting opportunities, even by the same Court of Appeals.[152]

Bush v. Gore: Arbitrary and disparate treatment of voters. The highly publicized case of Bush v. Gore came in the aftermath of the closely contested presidential election of 2000. At issue were Florida rules for manually evaluating the intent of the voter on ballots not clearly enough marked to be machine-read.[153] The Court determined that “[t]he want of [specific rules designed to ensure uniform treatment] ha[d] led to the unequal evaluation of ballots in various respects.” Further emphasizing that “[t]he formulation of uniform rules to determine intent” from ballot markings “is practicable,” the Court held that such rules were constitutionally “necessary” and that Florida’s system was therefore unconstitutional.[154]

The Court held in Bush v. Gore that the Equal Protection Clause prohibits “arbitrary and disparate treatment” by a state toward “the members of its electorate,” because “nonarbitrary treatment of voters [is] necessary to secure the fundamental right[ to vote].”[155] This extended the Court’s reasoning in Gray v. Sanders, which had decades earlier held unconstitutional a system that unequally weighted the votes of Georgia voters depending on where they lived.[156] More concretely, a state may not run an election system that, “by . . . arbitrary and disparate treatment, value[s] one person’s vote over that of another” or imposes election procedures that cause an “unequal evaluation of ballots” cast by different voters.[157]

Election practices held unconstitutional under Bush v. Gore’s “arbitrary and disparate treatment” theory include:[158] disqualifying certain types of provisional ballots cast at the wrong location but not others;[159] offering disparate early voting opportunities for military and nonmilitary voters;[160] applying informal, subjective procedures to determine voters’ eligibility to vote when challenged and treating challenges from different parties differently;[161] and deploying multiple voting technologies with different accuracy/error rates.[162] The most apposite precedent is Stewart v. Blackwell, in which the Sixth Circuit held unconstitutional Ohio’s continued use of “antiquated voting equipment” well recognized as “inherently flawed” and likely to disenfranchise “thousands of Ohio voters” when used alongside more modern technology.[163]

Vote dilution. “Vote dilution” refers to diminishing the relative weight of certain voters’ votes compared to others, without preventing them from casting ballots.[164] Election practices that cause vote dilution have been held to be unconstitutional in a number of contexts related to electoral districting: overpopulated districts dilute their residents’ votes (relative to votes from less populated districts); the votes of minority voters who have been “packed” or “cracked” by strategically drawn district boundaries may be diluted; and similarly, partisan gerrymandering may cause dilution of the votes of a group with a particular political preference.[165] The constitutional problem arises when one person’s vote is made to count for less than it ought to, since “the right of suffrage can be denied by a debasement or dilution of the weight of a citizen’s vote just as effectively as by wholly prohibiting the free exercise of the franchise.”[166]

State constitutions. Unlike the federal constitution, almost all state constitutions contain explicit language granting the right to vote,[167] and most state constitutions also guarantee secrecy in voting.[168] “[T]he prevailing norm for most state constitutional adjudication”[169] in right-to-vote and related Equal Protection Clause cases is a lockstep approach in which state courts “simply follow[] federal jurisprudence for the analogous right,” effectively “declaring that state law goes only as far as federal law.”[170] Academic criticism has described the lockstep approach as “often [resulting in] a derogation of citizens’ state constitutional right to vote” because state constitutions “go further than the U.S. Constitution in conferring voting rights.”[171] A few state courts take a more state-focused approach in which courts “giv[e] independent force to state constitutional protections of individual liberties, such as the right to vote,” subject to “the ‘federal floor’ of federal court jurisprudence [in cases where] the state constitution is insufficient.”[172] Further details of state constitutional law are beyond the scope of this Article.

B. Statutory Constraints on Election Administration

Federal statutory requirements. Three federal statutes notably constrain state and local election administration: the Voting Rights Act (VRA), the National Voter Registration Act (NVRA), and HAVA. The VRA deals with features of election administration that could racially discriminate against certain voters.[173] The NVRA aims to promote voter registration by requiring states to support certain registration methods.[174] Insecure election infrastructure could conceivably facilitate violations of the VRA and the NVRA;[175] however, the statutes do not provide direct guidance on how to secure elections.

It is HAVA, “the federal government’s most significant intervention to date in the ‘nuts and bolts’ of election administration,”[176] that bears most directly on election security.

In relevant part,[177] Title III of HAVA imposes certain requirements on states’ administration of federal elections. Regarding election technology, Title III requires that marked ballots be verifiable by voters before casting (and correctable if errors are discovered);[178] that voting systems produce an auditable record of cast votes;[179] that there be at least one accessible voting machine for voters with disabilities “that provides the same opportunity for access and participation (including privacy and independence) as for other voters;”[180] and that the error rate of tabulation technology comply with standards set by the Federal Election Commission.[181] Title III also requires states to make provisional voting available to voters whose registration or eligibility is contested at the polling place[182] and to maintain an authoritative “computerized statewide voter registration list” with “adequate technological security measures to prevent the unauthorized access” as well as provisions to ensure the list is accurate and up to date.[183]

Title I of HAVA authorizes federal funds for improving federal election administration, including the acquisition and upgrade of “voting systems and technology and methods for casting and counting votes.”[184] HAVA’s initial allocation was $650 million, with a provision for additional subsequent appropriations.[185]

Finally, Title II of HAVA sets up the EAC, a new agency charged with overseeing HAVA’s implementation. Notably, the EAC is tasked with “provid[ing] for the testing, certification, decertification, and recertification of voting system hardware and software by accredited laboratories,” in conjunction with the National Institute of Standards and Technology.[186] States may opt to, but are not required to, use this accredited certification.[187] Additionally, Title II has multiple provisions aiming to improve future election administration and technology: Subtitle C provides that the EAC “shall conduct and make . . . public studies regarding . . . the election administration issues . . . with the goal of promoting . . . convenien[ce], accessib[ility], and eas[e of] use . . . [as well as] the most accurate, secure, and expeditious system for voting and tabulating election results.”[188] Subtitle D provides for “grants to assist entities in carrying out research and development to improve the quality, reliability, accuracy, accessibility, affordability, and security of voting equipment, election systems, and voting technology,”[189] and “pilot programs under which new technologies in voting systems and equipment are tested and implemented on a trial basis so that the results of such tests and trials are reported to Congress.”[190]

An important feature of HAVA regarding voting machines is its requirement that all punch card and lever voting machines be replaced by the next federal election (then, 2004), although exceptions were permitted for good cause. While it was beneficial to phase out the problematic punch card and lever machines, this initiative unexpectedly backfired in terms of security because the replacement machines were often DRE machines[191] that are now disfavored due to security and auditability concerns.[192] DRE machines were explicitly recommended, and also favored for their accessibility features, in HAVA itself.[193] Subsection IV.B.3 provides further discussion of how understanding of the security risks of DRE machines has evolved over time.

State statutory requirements. State election codes generally specify requirements for voter qualifications, voter registration, nominating candidates, early voting, absentee voting, military voting, appointment and removal of election personnel, districting, what kinds of questions may appear on the ballot, and election-related crimes.[194] State election codes also impose some constraints on ballot design, voting equipment, and polling place setup and management.[195] Within the constraints of state (and federal) law, local officials have broad discretion to make administrative decisions.[196]

Regarding election security, state election codes often specify rules—or specify the body, such as a board of elections, that is authorized to make rules—about ballot secrecy at polling places; adoption, testing, and other technical requirements on voting technology; security of voter registration systems; procedures to identify voters and verify their registration; public observation of election processes; procedures to challenge alleged irregularities in election administration; and any post-election audit requirements.[197] While all state election codes include some such provisions, some states’ codes are less detailed than others and may lack provisions regarding many of the above aspects. Notably, many states require new voting equipment to conform to federal guidelines (which are only advisory unless states choose to adopt some or all of them as mandatory).[198]

IV. A Constitutional Right to Vote Securely

We often conceptualize the act of voting as the act of placing a ballot in a box, but this conception is deceptively simplistic when considering the right to vote. The Supreme Court has “repeatedly recognized”[199] that the Constitution protects not just “the right to put a ballot in a box”[200] but rather the right to cast an “effective[]” vote[201] “for the candidate of one’s choice”[202] that “must be correctly counted and reported.”[203] In the words of leading election law scholars, “Exercising the right to vote effectively requires that voters’ intentions be recorded and counted accurately.”[204] What happens after the ballot goes in the box is just as important as access to the ballot box and the placing of the ballot in the box—in other words, casting a ballot is meaningless if the ballot box is a dumpster on fire.

Most U.S. voting rights litigation to date has focused on practices that disenfranchise voters by preventing them from even casting a ballot. This is unsurprising given a long history of outright “deny[ing] or restrict[ing] the right of suffrage”[205] for particular groups of people, and given that, for centuries, the system of placing a paper ballot in a physical ballot box meant that methods for tampering with ballots after casting were relatively limited and not very scalable.[206] Still, the Supreme Court’s earliest voting rights cases[207]—as well as common sense and common usage of the term “vote”—recognize that casting, counting, and reporting are all essential components of the right to vote.

Today, the relevance of disenfranchisement at the counting and reporting stages—after the ballot goes in the box—has grown dramatically with the introduction of complex technologies for ballot casting, tallying, and reporting. Such technologies introduce the potential for mishaps and misconduct in the tallying process that are much more complex, scalable, and difficult to detect. Disenfranchisement after casting—that is, not counting a ballot towards the eventual election outcome after allowing a voter to cast it—devalues a person’s vote just as much as if they had never cast it and indeed can be more insidious and harder to litigate than denying access to the ballot, as it can be done without the disenfranchised person ever finding out. In light of this, constitutional voting rights jurisprudence needs to develop a more detailed approach to voting rights violations after ballot casting. This Part aims to develop such an approach in the specific context of election system security.

As noted earlier, there are at least three federal constitutional voting rights doctrines under which a constitutional right to vote securely might naturally arise: (1) the Anderson-Burdick balancing test for burdens on the right to vote, (2) Bush v. Gore’s prohibition of arbitrary and disparate treatment of voters, and (3) vote dilution.

A. Insecure Technology As a Burden on Voting Rights Under Anderson-Burdick

Recall that in the Anderson-Burdick balancing test, the level of scrutiny applicable to a challenge to an election regulation depends on the magnitude of the burden it places on voting rights.[208] In practice, essentially any election regulation is treated as a burden on voting rights that triggers the balancing test.[209] The facts of the seminal cases that apply the test make clear that burdens under Anderson-Burdick need not directly encumber the act of casting a vote. Rather, burdens under Anderson-Burdick have been construed broadly to mean any impediment to the free and effective exercise of the franchise.

Thus, all of the real doctrinal work is done in the balancing—each burden must be justified by relevant and legitimate state interests “sufficiently weighty to justify the limitation.”[210] Whether such burdens are constitutional depends on whether the state adequately justifies them based on legitimate state interests.[211]

1. The Sliding Scale

In more detail, the Anderson-Burdick balancing test requires courts to weigh (1) the burden imposed against (2) the State interests offered as justification and (3) how necessary or narrowly tailored the election regulation is to serve the stated interests.[212]

While “severe” burdens are subject to strict scrutiny (that is, a severely burdensome regulation must be “narrowly drawn to advance a state interest of compelling importance”[213]), the proper treatment of lesser burdens has been less precisely articulated by the Supreme Court. Burdick stated simply that “the State’s important regulatory interests are generally sufficient to justify [reasonable, nondiscriminatory] restrictions.”[214] Burdick’s phrasing appears to establish a form of intermediate scrutiny requiring “important” state interests. Burdick emphasized that the state interests asserted were “legitimate” and “sufficient to outweigh the limited burden” imposed by the challenged ban on write-in voting, and that the ban was “a reasonable way of accomplishing [the State’s] goal[s].”[215]

On the other end of the sliding scale, the Sixth Circuit has applied rational-basis review[216] to “minimally burdensome and nondiscriminatory” election regulations,[217] contrasting them with “regulations that impose a more-than-minimal but less-than-severe burden,” which are subject to Burdick and Crawford’s intermediate scrutiny.[218] This Sixth Circuit approach is consistent with Crawford’s lead opinion but diverges from a concurrence that argues that Anderson-Burdick establishes just two scrutiny levels.[219]
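The three tiers described above can be summarized schematically. The following is only an illustrative sketch of the sliding scale as this Section describes it, not a statement of how any court mechanically decides a case: the names Burden, Scrutiny, TIER, and scrutiny_for are hypothetical labels introduced here, the three-tier mapping follows the Sixth Circuit approach discussed above (a concurrence in Crawford would recognize only two levels), and the fact-specific work of classifying a burden and weighing state interests is not modeled at all.

```python
from enum import Enum


class Burden(Enum):
    """Hypothetical labels for the magnitude of a burden on voting rights."""
    MINIMAL = "minimally burdensome and nondiscriminatory"
    MODERATE = "more than minimal but less than severe"
    SEVERE = "severe"


class Scrutiny(Enum):
    """Levels of review discussed in the text."""
    RATIONAL_BASIS = "rational-basis review"
    INTERMEDIATE = "intermediate scrutiny"
    STRICT = "strict scrutiny"


# Assumed three-tier mapping, following the Sixth Circuit approach
# described in the text; it is a summary aid, not a rule of decision.
TIER = {
    Burden.MINIMAL: Scrutiny.RATIONAL_BASIS,
    Burden.MODERATE: Scrutiny.INTERMEDIATE,
    Burden.SEVERE: Scrutiny.STRICT,
}


def scrutiny_for(burden: Burden) -> Scrutiny:
    """Return the level of review the sketch associates with a burden."""
    return TIER[burden]


if __name__ == "__main__":
    for burden in Burden:
        print(f"{burden.value} -> {scrutiny_for(burden).value}")
```

The lookup table makes one point visible: under this reading, the classification of the burden determines the standard of review, which is why the characterization of insecure technology as a burden matters to the analysis that follows.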

2. Insecure Election Technology Is a Burden on the Right to Vote

Mandating the use of insecure election technology qualifies as a burden under Burdick’s “however slight” threshold test.[220] A voter whose vote is deleted, miscounted, or ignored due to a security failure has been completely deprived of their vote, so an insecure election system creates the burden that a voter’s vote is at heightened risk of not being properly counted.